Open Source LLM vs. API: How to Make the Build-vs-Buy Decision for Your AI Product

The assumption that open source models can't match frontier APIs was true in 2023. In 2026, it isn't. Teams still building on that assumption are making architecture decisions with outdated data, and the cost shows up later when it's harder to fix.

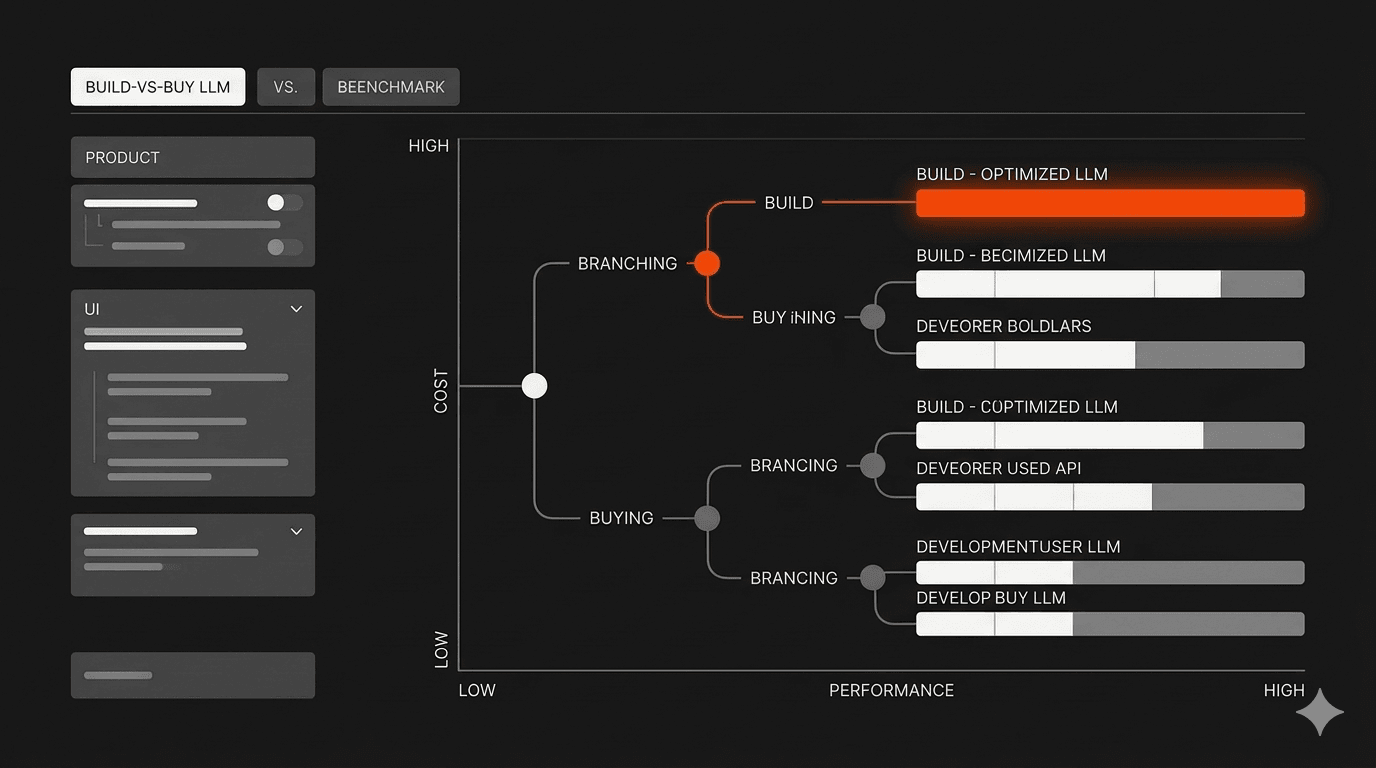

The performance gap between open-source and proprietary models has closed to single digits on most practical benchmarks. Open source LLMs now match or beat closed models on knowledge, math, and even graduate-level science reasoning, with closed models holding a narrowing lead only on production-grade software engineering tasks and the most complex agentic workflows.

This changes the build-vs-buy question. Not by making the answer obvious, but by making the decision more honest. It's no longer "can open source handle this?" It's "do the infrastructure cost, team complexity, and operational risk justify switching?"

Here's the framework we use.

The Performance Gap Is Gone on Most Tasks

What the benchmarks actually show in 2026

The convergence happened fast and across multiple model families simultaneously. That's what makes it structural rather than a one-off.

Five independent open-source model families reached frontier quality within the same release cycle: DeepSeek, Qwen, Kimi, GLM, and Mistral. GLM-4.7 scores 94.2 on HumanEval, the highest of any tracked model. MiniMax M2.5 hits 80.2 on SWE-bench Verified, which tests real GitHub issue resolution, not synthetic coding problems. DeepSeek V3.2 effectively ties proprietary models on general knowledge benchmarks.

For most practical development tasks, the quality difference between a well-chosen open source model and a frontier API is no longer the deciding factor.

Where frontier APIs still have a real edge

Closed models still hold a measurable lead in two specific areas: complex multi-step agentic workflows and the hardest software engineering tasks at scale. On SWE-bench, which simulates real production debugging across large codebases, closed models maintain a lead that open source hasn't closed yet.

If your product is an AI coding agent working across a large monorepo, that gap matters. If your product uses LLMs for classification, summarization, extraction, or chat, it probably doesn't.

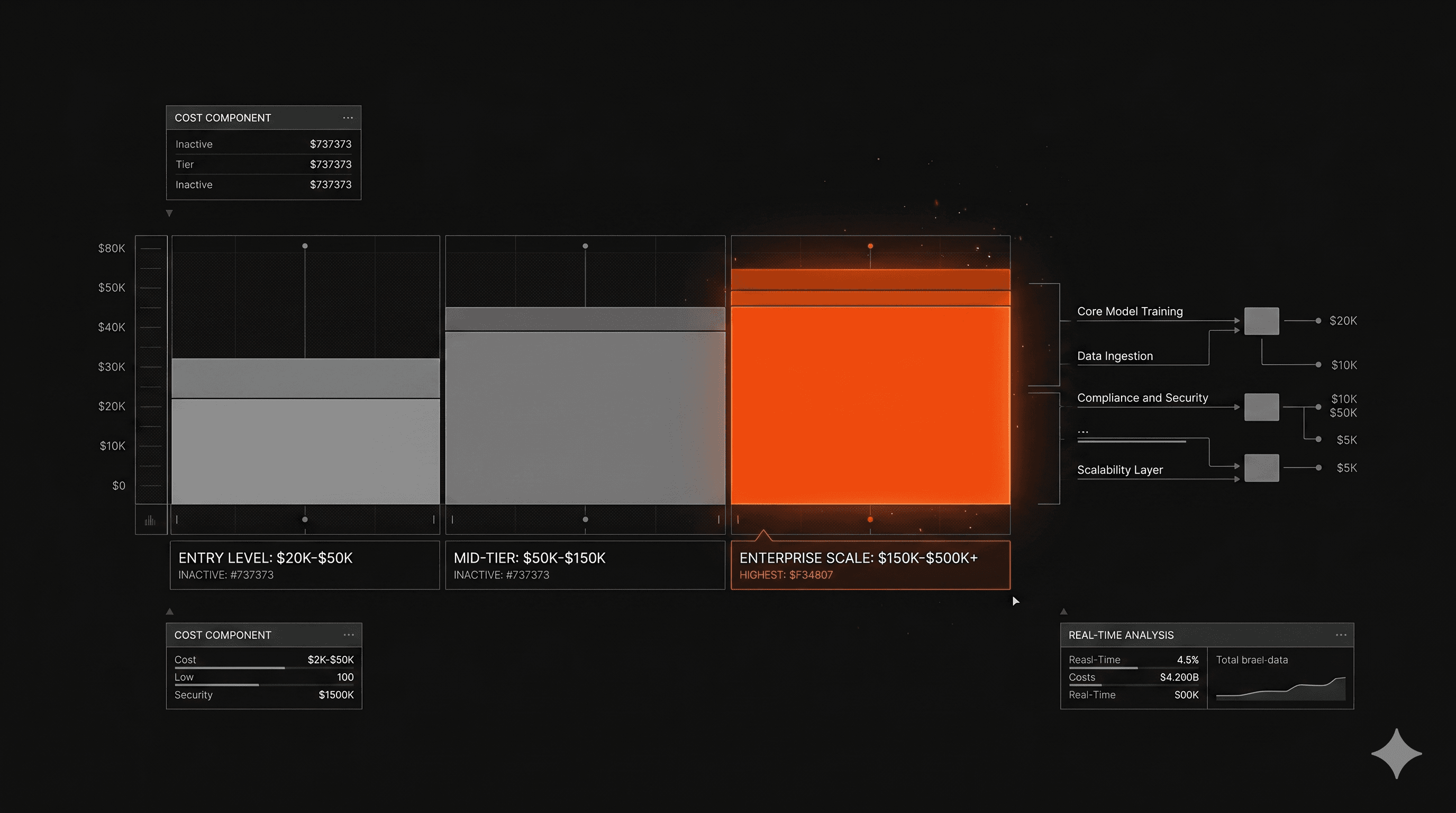

The Real Cost Comparison

When APIs win and the math is clear

At low to moderate token volumes, managed APIs win on total cost of ownership. There's no GPU to provision, no inference stack to maintain, and no MLOps overhead. You pay per token and your engineering team stays focused on the product.

Below roughly 40 to 50 million tokens per month, the fixed infrastructure costs of self-hosting rarely justify the switch. For most early-stage products and MVPs, that threshold is months away from being relevant.

There's also a pricing tailwind. LLM API costs dropped approximately 80% between early 2025 and early 2026. What cost $5 per million tokens now costs under $1. The economics of managed APIs keep improving without requiring anything from your team.
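To make the pricing arithmetic concrete, here is a minimal sketch. The function names are hypothetical, and the inputs are only the illustrative figures from this section ($5 and $1 per million tokens, 50 million tokens per month), not quotes from any specific vendor.

```python
def price_drop_percent(old_price: float, new_price: float) -> float:
    """Percentage decrease from old_price to new_price."""
    return (old_price - new_price) * 100 / old_price


def monthly_api_cost(tokens_per_month: int, price_per_million: float) -> float:
    """Monthly API bill in dollars for a flat per-million-token price."""
    return tokens_per_month / 1_000_000 * price_per_million


# Illustrative inputs taken from the figures quoted in this section.
drop = price_drop_percent(5.0, 1.0)
bill_before = monthly_api_cost(50_000_000, 5.0)
bill_after = monthly_api_cost(50_000_000, 1.0)

print(f"Price drop: {drop:.0f}%")
print(f"Monthly bill at 50M tokens: ${bill_before:.0f} before, ${bill_after:.0f} after")
```

At these assumed prices, a product at the upper end of the 40 to 50 million token range pays tens of dollars per month, which is why fixed self-hosting costs are hard to justify at that volume.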

The break-even point for self-hosting

The math shifts at scale. Self-hosted LLMs running on current hardware can reach cost parity with cloud APIs within one to four months at moderate usage of around 30 million tokens per day, with API per-token costs running roughly 40 to 200 percent higher than self-hosted costs afterward.

For products processing high volumes of structured, repetitive tasks, that calculus is worth running. Document processing, classification pipelines, and high-volume agent steps are where self-hosting starts generating real savings.
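The break-even math above can be sketched as a simple payback calculation. Everything here is an assumption for illustration: the function name, the hardware cost, the monthly self-hosting cost, and the API price are hypothetical, and only the 30 million tokens per day figure comes from this section.

```python
from typing import Optional


def payback_months(
    tokens_per_day: int,
    api_price_per_million: float,
    upfront_hardware_cost: float,
    monthly_self_host_cost: float,
    days_per_month: int = 30,
) -> Optional[float]:
    """Months until upfront hardware spend is recovered by monthly savings.

    Returns None when self-hosting is not cheaper per month, because in
    that case the hardware is never paid back.
    """
    monthly_api_cost = tokens_per_day * days_per_month / 1_000_000 * api_price_per_million
    monthly_savings = monthly_api_cost - monthly_self_host_cost
    if monthly_savings <= 0:
        return None
    return upfront_hardware_cost / monthly_savings


# Hypothetical inputs: $5 per million tokens, $9,000 of hardware,
# $1,500 per month to run it.
months = payback_months(
    tokens_per_day=30_000_000,
    api_price_per_million=5.0,
    upfront_hardware_cost=9_000.0,
    monthly_self_host_cost=1_500.0,
)
print(months)
```

The `None` branch matters as much as the number: at low volume or low API prices, the monthly savings are zero or negative and there is no break-even point at all.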

What most teams miss in the calculation

The token cost comparison is the easy part. The harder part is everything else.

A production-ready self-hosted deployment requires GPU provisioning, an inference framework like vLLM or TGI, redundancy and failover infrastructure, monitoring, and an engineering team that knows how to run it. A basic Kubernetes setup with GPU node pools, monitoring, and load balancing adds $200 to $500 per month before accounting for the engineering time to build and maintain it.

That engineering time is the real cost that most spreadsheets don't capture.
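A rough sketch of what a spreadsheet looks like once engineering time is included. The GPU cost, hours, and hourly rate below are hypothetical placeholders; the infrastructure overhead sits inside the $200 to $500 range mentioned above.

```python
from dataclasses import dataclass


@dataclass
class SelfHostingCosts:
    gpu_monthly: float             # hypothetical GPU rental or amortized hardware
    infra_overhead_monthly: float  # Kubernetes, monitoring, load balancing
    engineering_hours: float       # hypothetical hours per month to run it
    hourly_rate: float             # hypothetical fully loaded engineer cost

    @property
    def engineering_monthly(self) -> float:
        return self.engineering_hours * self.hourly_rate

    @property
    def total_monthly(self) -> float:
        return self.gpu_monthly + self.infra_overhead_monthly + self.engineering_monthly

    @property
    def engineering_share(self) -> float:
        """Fraction of the monthly total that is engineering time."""
        return self.engineering_monthly / self.total_monthly


costs = SelfHostingCosts(
    gpu_monthly=1_500.0,
    infra_overhead_monthly=350.0,
    engineering_hours=40.0,
    hourly_rate=100.0,
)
print(f"Total: ${costs.total_monthly:.0f} per month")
print(f"Engineering share: {costs.engineering_share:.0%}")
```

Under these assumed inputs, one engineer-week per month is the largest line item, which is the cost a token-only comparison leaves out.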

The Hidden Costs That Break the Self-Hosting Model

Infrastructure and MLOps overhead

The pattern we see consistently: teams prototype with a lightweight local setup, ship something that works, then discover that production requires a completely different infrastructure investment than development did.

The common trajectory is teams starting with Ollama for local development, realizing production demands more engineering than expected, then either building a dedicated MLOps function or migrating to a managed solution. That migration costs time and money that wasn't in the original estimate.

For most product teams, the question isn't whether they can build self-hosted infrastructure. It's whether that's the best use of their engineering capacity.

Vendor lock-in cuts both ways

The argument for self-hosting often starts with avoiding vendor lock-in. That's legitimate. OpenAI retired 33 models in January 2025 alone, and when GPT-5 launched, changes to model routing broke production workflows overnight. Dependency on a third-party API is a real operational risk.

But self-hosting creates its own lock-in. You're tied to the hardware you've provisioned, the inference stack you've chosen, and the team that knows how to run it. Switching models or scaling up requires engineering work every time. Neither path eliminates dependency. It just changes what you're dependent on.

How This Changes the Architecture Decision for Your AI Product

What this means if you're building an MVP

For an MVP, the answer is almost always managed APIs. The goal is validating the product, not optimizing infrastructure. You want to find out if the product works before spending engineering time on how it runs.

We've shipped over 50 AI products, and the week-one architecture decisions that create the most problems later are almost never about model choice. They're about data flow, context management, and how much the product actually depends on model-specific behavior. Getting those right is more valuable than saving on token costs at a stage when token volume is still small.

Using APIs at the MVP stage also preserves optionality. If the product gets traction and volume grows, you have real usage data to inform the self-hosting decision. If it doesn't, you haven't invested in infrastructure for a product that didn't find its market.

What this means if you're building at enterprise scale

At enterprise scale, the calculation changes and the decision gets more nuanced. Data privacy requirements, compliance constraints, and high token volumes can all push toward self-hosting. But the organizational complexity of managing it pushes the other way.

For enterprise teams, the more useful question is usually: which parts of your AI pipeline genuinely require self-hosting, and which don't? Most enterprise AI products aren't monolithic. They're pipelines with multiple model calls at different complexity levels.

That's where the hybrid approach matters most.

What We've Learned Across 50+ Products

When we recommend managed APIs

For any product in the first six months of production, and for any team that doesn't have dedicated MLOps capacity, managed APIs are the default recommendation. The quality is there. The operational overhead isn't worth taking on until volume justifies it.

We also recommend APIs for any task where frontier model quality genuinely matters: complex reasoning, multi-step agent workflows, and anything where the difference between a good answer and a great answer has direct product impact.

When we recommend open source

We recommend open source when data privacy is a hard requirement, when token volumes are high enough that the math clearly favors self-hosting, or when fine-tuning on proprietary data would produce meaningfully better results for a specific task. Those conditions are real, but they're less common at early stage than teams assume.

The hybrid approach most production products end up using

The architecture we see work most consistently is tiered routing: a majority of requests go to a budget or mid-tier model, a smaller share goes to a premium API for complex tasks, and a self-hosted specialized model handles high-volume, low-complexity work where that's been validated.

We built exactly this kind of multi-model architecture for MeasureAI, combining YOLO/RT-DETR, CNNs, OCR, and ensemble logic across different parts of the analysis pipeline. The full breakdown is here. Each model handled what it was actually good at, rather than routing everything through one approach.
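The tiered routing described above can be sketched as a small routing function. This is an illustration under stated assumptions, not the MeasureAI implementation: the `Request` type, the task names, the complexity score, and both thresholds are hypothetical, and a real system would need some upstream way to estimate complexity.

```python
from dataclasses import dataclass


@dataclass
class Request:
    task: str          # hypothetical label, e.g. "classification" or "agent_step"
    complexity: float  # hypothetical 0.0 to 1.0 score from an upstream estimator


# Assumed set of tasks already validated for the self-hosted model.
VALIDATED_SIMPLE_TASKS = {"classification", "extraction"}
SIMPLE_THRESHOLD = 0.3   # assumed cutoff for low-complexity work
PREMIUM_THRESHOLD = 0.8  # assumed cutoff for complex work


def route(request: Request) -> str:
    """Pick a model tier for one request."""
    # High-volume, low-complexity work goes to the self-hosted model.
    if request.task in VALIDATED_SIMPLE_TASKS and request.complexity < SIMPLE_THRESHOLD:
        return "self_hosted"
    # The small share of complex requests goes to the premium API.
    if request.complexity >= PREMIUM_THRESHOLD:
        return "premium_api"
    # Everything else, the majority, goes to the budget or mid-tier model.
    return "mid_tier_api"


for req in [
    Request("classification", 0.1),
    Request("agent_step", 0.9),
    Request("chat", 0.4),
]:
    print(req.task, "->", route(req))
```

Keeping the routing rule in one function means the thresholds can be tuned against real usage data without touching the code that calls each model.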

FAQ

What is the difference between open source LLMs and proprietary APIs?

Open source LLMs are models whose weights are publicly released, allowing teams to download, run, and modify them on their own infrastructure. Proprietary APIs like GPT-5 or Claude Opus are closed models accessed exclusively through a vendor's API, where you pay per token and the model runs on their infrastructure.

The practical difference in 2026 is smaller than it used to be on capability. It's larger on operational complexity, data control, and cost at scale.

Is it cheaper to self-host an LLM than use an API?

It depends on volume. Below roughly 40 to 50 million tokens per month, managed APIs are typically cheaper when infrastructure and engineering costs are included. Above that threshold, self-hosting can generate significant savings. The break-even calculation needs to include GPU costs, inference software, redundancy, monitoring, and the engineering time to build and maintain it.

Which open source LLMs are best for coding tasks in 2026?

For software engineering tasks measured against real-world codebases, MiniMax M2.5 scores highest on SWE-bench Verified at 80.2. For general code generation, GLM-4.7 leads HumanEval at 94.2. DeepSeek V3.2 offers strong all-around performance under a permissive MIT license. The right choice depends on your specific use case, context window requirements, and hardware constraints.

The Decision Framework

The open source vs. API question doesn't have a universal answer. It has a math problem, a risk assessment, and a team capacity question layered on top of each other.

The performance parity is real. What's changed is that model quality is no longer the primary reason to choose one path over the other. Infrastructure cost, team complexity, data requirements, and where you are in the product lifecycle now drive the decision more than benchmarks do.
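As a rough sketch, the recommendations in this article can be encoded as a decision function. The field names, the order in which the rules are checked, and the use of 50 million tokens per month as the volume cutoff are assumptions made for illustration; a real decision would weigh these factors rather than apply them mechanically.

```python
from dataclasses import dataclass


@dataclass
class ProductProfile:
    months_in_production: int
    has_mlops_team: bool
    privacy_hard_requirement: bool
    tokens_per_month: int
    needs_frontier_quality: bool


# Assumed cutoff: upper end of the 40 to 50 million tokens per month range.
VOLUME_THRESHOLD = 50_000_000


def recommend(profile: ProductProfile) -> str:
    """Return a starting-point recommendation, not a final answer."""
    # Assumption: a hard privacy requirement overrides everything else.
    if profile.privacy_hard_requirement:
        return "self-hosted open source"
    # Early-stage products and teams without MLOps capacity default to APIs.
    if profile.months_in_production < 6 or not profile.has_mlops_team:
        return "managed API"
    # At high volume, self-host the bulk work; keep an API for hard tasks.
    if profile.tokens_per_month > VOLUME_THRESHOLD:
        return "hybrid" if profile.needs_frontier_quality else "self-hosted open source"
    return "managed API"


mvp = ProductProfile(
    months_in_production=1,
    has_mlops_team=False,
    privacy_hard_requirement=False,
    tokens_per_month=2_000_000,
    needs_frontier_quality=True,
)
print(recommend(mvp))
```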

If you're building an AI product and working through this decision, our process page covers how we approach architecture decisions at different stages. The vibe coding post is also relevant context on what production readiness actually requires.

At Imaginary Space, we've run this calculation across 50+ products. The right answer is almost always "it depends on the specific product," and the variables that matter most are usually volume, data sensitivity, and whether your team has MLOps capacity to burn.