How Much Does It Cost to Build an AI Product? A Real Breakdown for 2026

The honest answer is: it depends. The more useful answer is: most companies are asking the wrong question.

"How much does this cost?" is the right question to ask at the end of a scoping conversation — not before it. The ranges you'll find online aren't wrong, exactly. It's just that a $50K project and a $500K project can both be called "an AI product" and both numbers can be accurate depending on what you're actually building. Treating them as the same question will either waste your budget or set you up to underbuild.

The real cost of an AI product isn't determined primarily by the model you use, the number of features you spec out, or even the size of the engineering team. It's determined by the architecture decisions made in week one — and whether the team building it has made them before. This post breaks down what's actually driving cost, what realistic ranges look like by project type, and where companies typically lose money before they know it.

What You're Actually Paying For (It's Not What You Think)

The model is the cheapest part

This surprises most first-time buyers. The API costs for GPT-4o, Claude, or Gemini are, in the context of a full product build, usually close to negligible, at least at MVP-stage traffic. You're not paying for the intelligence. You're paying for everything around it: the pipelines that feed it data, the architecture that makes it reliable at scale, the integration layer that connects it to your existing systems, and the engineering judgment that keeps it from collapsing in production.
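A quick back-of-envelope calculation shows why. The Python sketch below estimates a monthly API bill from request volume and token counts. Every input is a hypothetical placeholder, including the per-million-token prices, which are illustrative rather than quoted from any provider; real prices vary by model and change often, so substitute current figures before relying on the result.

```python
def monthly_api_cost(requests_per_day: int,
                     input_tokens: int,
                     output_tokens: int,
                     usd_per_m_input: float,
                     usd_per_m_output: float,
                     days: int = 30) -> float:
    """Estimate monthly LLM API spend in USD from per-request token counts."""
    per_request = (input_tokens * usd_per_m_input
                   + output_tokens * usd_per_m_output) / 1_000_000
    return per_request * requests_per_day * days


# Hypothetical MVP-stage workload and placeholder prices (not real quotes).
cost = monthly_api_cost(requests_per_day=2_000,
                        input_tokens=1_500,
                        output_tokens=500,
                        usd_per_m_input=2.50,
                        usd_per_m_output=10.00)
build_budget = 100_000  # hypothetical mid-range MVP build cost, USD
print(f"API spend: ${cost:,.2f}/month")
print(f"Share of build budget per month: {cost / build_budget:.2%}")
```

With these placeholder inputs the bill comes to $525 a month, well under 1% of a hypothetical $100K build budget. The same formula also shows where the assumption breaks: long prompts, large outputs, or heavy traffic multiply the result quickly, which is why inference costs are still worth modeling before launch.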

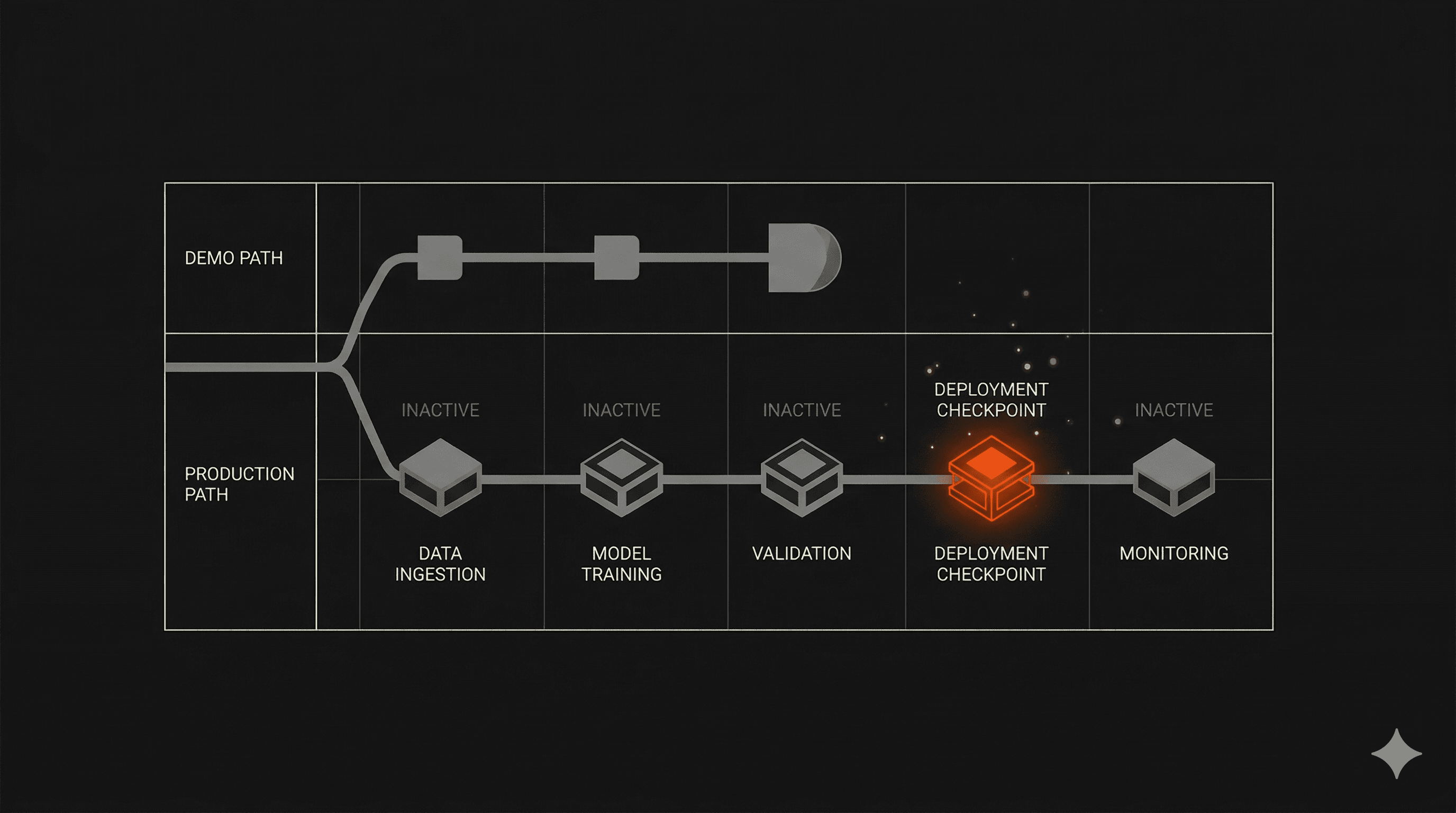

A common mistake is to prototype with a model, see it working, and assume the hard part is done. The demo is the easy part. The hard part is building a system that behaves consistently when 10,000 users hit it simultaneously, when your data has gaps, and when the edge cases that didn't show up in testing start arriving in production.

What actually drives cost

Three things determine where your project lands on the cost spectrum:

Architecture complexity. A single-model product that queries an LLM and returns a response is not the same as a multi-model system that orchestrates YOLO for object detection, OCR for text extraction, and an ensemble layer for confidence scoring. The former can be prototyped over a weekend. The latter is a serious engineering build.

Data readiness. If your data is clean, labeled, and accessible, the AI layer drops in faster. If you need data pipelines, transformation logic, or custom training sets built from scratch, that cost sits upstream of the AI work itself — and it's frequently underestimated.

Integration scope. A standalone product is simpler and cheaper than one that has to plug into an existing ERP, pass healthcare compliance requirements, or sync with third-party APIs that weren't designed to be connected to an AI layer.
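To make the architecture-complexity point concrete, here is a minimal Python sketch of the orchestration layer a multi-model product needs. It is illustrative only: `detect_objects` and `extract_text` are hypothetical stand-ins for a YOLO detector and an OCR engine that return canned results, and the weighted-average ensemble and its weights are assumptions, one simple way among many to combine confidences. Even in this toy form, the orchestrator has to isolate failures and reconcile outputs from separate models, work a single LLM call never needs.

```python
from dataclasses import dataclass


@dataclass
class ModelResult:
    source: str        # which model produced the result
    label: str         # what the model thinks it found
    confidence: float  # model-reported confidence in [0, 1]


def detect_objects(image_id: str) -> list[ModelResult]:
    # Hypothetical stand-in for a YOLO object detector; returns canned output.
    return [ModelResult("detector", "invoice", 0.91)]


def extract_text(image_id: str) -> list[ModelResult]:
    # Hypothetical stand-in for an OCR engine; returns canned output.
    return [ModelResult("ocr", "invoice", 0.78)]


def ensemble_score(results: list[ModelResult],
                   weights: dict[str, float]) -> dict[str, float]:
    """Weighted average of confidences per label across models."""
    totals: dict[str, float] = {}
    weight_sums: dict[str, float] = {}
    for r in results:
        w = weights.get(r.source, 0.0)  # unknown models get zero weight
        totals[r.label] = totals.get(r.label, 0.0) + w * r.confidence
        weight_sums[r.label] = weight_sums.get(r.label, 0.0) + w
    return {label: totals[label] / weight_sums[label]
            for label in totals if weight_sums[label] > 0}


def analyze(image_id: str) -> dict[str, float]:
    results: list[ModelResult] = []
    # Run each model independently so one failing stage doesn't sink the request.
    for stage in (detect_objects, extract_text):
        try:
            results.extend(stage(image_id))
        except Exception as exc:  # in production: log, alert, maybe retry
            print(f"{stage.__name__} failed: {exc}")
    return ensemble_score(results, weights={"detector": 0.6, "ocr": 0.4})


print(analyze("doc-001"))
```

Here the `invoice` label scores about 0.858 (0.6 × 0.91 + 0.4 × 0.78). A label reported by only one model keeps that model's confidence; a production system might instead penalize disagreement, calibrate each model's scores, or route low-confidence cases to human review, and each of those choices adds engineering scope.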

AI Product Cost Ranges — With Context

These aren't wild estimates. They're the ranges that consistently appear across the market for well-scoped projects built by competent teams.

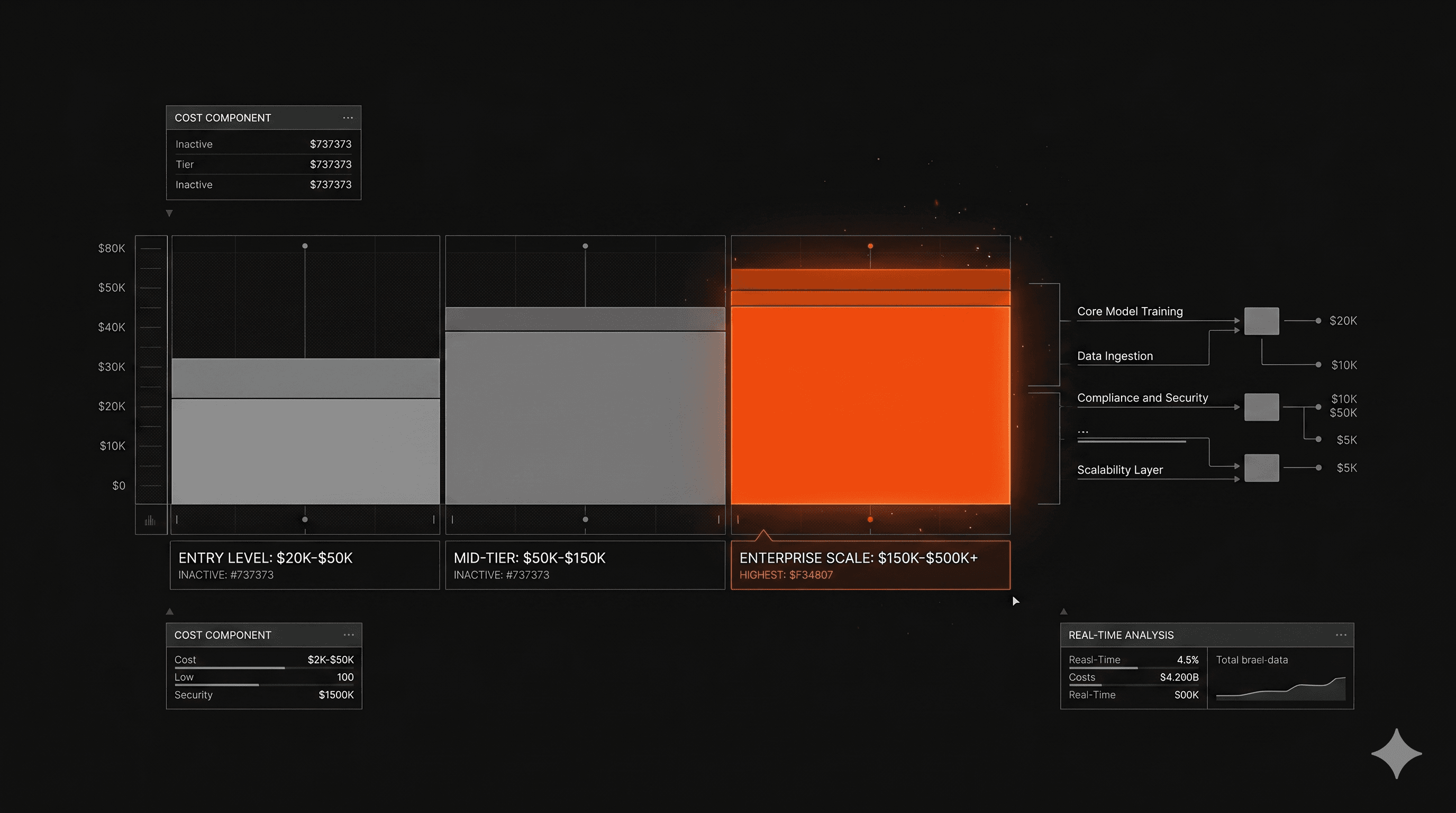

Proof of Concept / Internal Tool: $20K–$50K

This is a single-use-case product designed to validate a hypothesis or demonstrate feasibility. It's typically not production-ready — it runs on controlled inputs, hasn't been stress-tested, and isn't built to survive real user traffic. It answers the question: does this work in principle?

If a PoC is your goal, a 4-week engagement with a focused team can get you there. If you need it to hold up in production, you're in the next tier.

Production-Ready MVP: $50K–$150K

This is where most serious builds land when scoped correctly. A production-ready MVP isn't feature-complete — it's the minimum set of capabilities built to a standard that can survive real users, real data, and real edge cases. It has architecture that scales. It has testing. It has monitoring.

The $50K end of this range assumes a narrow scope, clean data, and limited integrations. The $150K end reflects broader feature scope, more complex AI components, or heavier integration requirements. This is also where timeline matters: a 4-week build and a 6-month build can cover the same scope at very different costs, depending on team structure and decision-making velocity.
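The timeline point is mostly arithmetic: for labor, cost scales roughly with team size times duration. The Python sketch below assumes a flat, hypothetical blended weekly rate per person and ignores everything except labor, so treat it as an illustration of the relationship rather than a pricing model.

```python
def build_cost(team_size: int, weeks: float, weekly_rate_usd: float) -> float:
    """Labor-only estimate: people x weeks x blended weekly rate per person."""
    return team_size * weeks * weekly_rate_usd


RATE = 5_000  # hypothetical blended weekly cost per person, USD

# Same team, same scope; only the calendar changes.
fast = build_cost(team_size=3, weeks=4, weekly_rate_usd=RATE)
slow = build_cost(team_size=3, weeks=26, weekly_rate_usd=RATE)  # ~6 months
print(f"4-week build:  ${fast:,.0f}")
print(f"6-month build: ${slow:,.0f}")
```

With a three-person team and a placeholder rate of $5,000 per person-week, the 4-week build comes to $60,000 and the roughly 6-month (26-week) build to $390,000. Nothing in the scope changed; only the duration did.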

We've shipped production-ready MVPs across this range — including AI platforms for construction tech, digital dentistry, and investment operations — consistently within a 4-week cycle. That's not a marketing claim. It's the direct output of making architecture decisions in week one and cutting everything that doesn't serve the core use case.

Enterprise-Grade AI Platform: $150K–$500K+

Once you move into enterprise territory — compliance requirements, multi-model orchestration, high-volume data pipelines, enterprise SSO, audit logging, role-based access control — costs scale accordingly. This is also where ongoing infrastructure and maintenance costs become a meaningful line item.

At this tier, you're not just building a product. You're building infrastructure that integrates with how an organization runs. For the initial build alone, market data puts enterprise-grade AI platforms at roughly $140K–$350K, with ongoing costs on top. The $500K+ end of the range reflects multi-year engagements, proprietary model training, or platforms that span multiple departments.

In-House vs. Agency: Where the Real Numbers Live

What an in-house AI team actually costs annually

Most cost comparisons between in-house and agency development only look at salaries. That's about 40% of the picture.

A functional in-house AI team, one with enough capability to build and maintain a production-grade product, requires at minimum two senior engineers, one ML specialist, one product manager, and one designer. In the US, that's roughly $600K–$900K in salaries alone, before benefits, equipment, recruiting fees, and the 3–6 months of ramp-up before anyone ships anything. Specialized AI development agencies, by comparison, typically deliver a comparable build for $30K–$150K per project, without that overhead.
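A rough first-year model makes the overhead visible. The Python sketch below starts from the salary range, five-person headcount, and 3–6 month ramp described above; the benefits rate (25% of salary), recruiting fee (20% of salary, assuming every hire goes through a recruiter), and $5,000 of equipment per head are hypothetical placeholders, not sourced figures, so adjust them to your market.

```python
def first_year_in_house_cost(total_salaries: float,
                             headcount: int,
                             benefits_rate: float = 0.25,       # hypothetical
                             recruiting_fee_rate: float = 0.20,  # hypothetical
                             equipment_per_head: float = 5_000   # hypothetical
                             ) -> float:
    """Rough first-year cost of an in-house team; all rates are placeholders."""
    benefits = total_salaries * benefits_rate
    recruiting = total_salaries * recruiting_fee_rate  # one-time, year one only
    equipment = headcount * equipment_per_head
    return total_salaries + benefits + recruiting + equipment


def ramp_cost(total_salaries: float, ramp_months: float,
              benefits_rate: float = 0.25) -> float:
    """Salary plus benefits paid out before the team ships anything."""
    return total_salaries * (1 + benefits_rate) * ramp_months / 12


for salaries in (600_000, 900_000):  # salary range from the article, 5 people
    year_one = first_year_in_house_cost(salaries, headcount=5)
    ramp_low, ramp_high = ramp_cost(salaries, 3), ramp_cost(salaries, 6)
    print(f"Salaries ${salaries:,}: year one ~${year_one:,.0f}, "
          f"${ramp_low:,.0f}-${ramp_high:,.0f} spent before first ship")
```

With those assumptions, year one lands at about $895K on $600K of salaries and about $1.33M on $900K, and $187.5K–$562.5K of that is payroll spent before the team ships anything.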

Why agencies deliver faster ROI on the first build

Institutional knowledge is the factor that most cost comparisons leave out. A team that has built 50+ AI products has already made the expensive mistakes on someone else's timeline. They know which architecture decisions create technical debt at month six. They know what not to build. That knowledge doesn't show up on a salary comparison, but it's the difference between a 4-week build and a 6-month build.

Research consistently shows that outsourcing AI development reduces total costs by 30–50% compared to equivalent in-house builds — and that's before accounting for the speed differential. Every month of delayed AI deployment is revenue and market position you're not capturing.
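The speed differential can be folded into the same comparison by counting the value forgone while the product isn't live. In the Python sketch below, the 30% saving is the conservative end of the range cited above; the $200K in-house build cost, the launch timelines, and the $40K of monthly value the product would generate are hypothetical placeholders.

```python
def cost_with_delay(build_cost: float, months_to_launch: float,
                    monthly_value: float) -> float:
    """Build cost plus the value forgone while the product isn't live yet."""
    return build_cost + months_to_launch * monthly_value


MONTHLY_VALUE = 40_000    # hypothetical revenue or savings per month once live
IN_HOUSE_BUILD = 200_000  # hypothetical in-house build cost, USD
SAVING = 0.30             # conservative end of the 30-50% range

in_house = cost_with_delay(IN_HOUSE_BUILD, months_to_launch=6,
                           monthly_value=MONTHLY_VALUE)
agency = cost_with_delay(IN_HOUSE_BUILD * (1 - SAVING), months_to_launch=1,
                         monthly_value=MONTHLY_VALUE)
print(f"In-house, live in 6 months: ${in_house:,.0f}")
print(f"Agency, live in 1 month:    ${agency:,.0f}")
```

Under these placeholders, the direct build saving is $60K, but the earlier launch accounts for another $200K of the gap ($440K versus $180K). The delay term grows linearly with both the monthly value and the months of delay, so it can easily outweigh the build saving for products with a clear revenue case.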

When in-house makes sense

In-house is the right answer in two specific scenarios. The first is when your AI model is the product itself: your competitive moat lives in the training data, the fine-tuning, or the proprietary architecture. The second is when you're operating at a scale that justifies a permanent team. If you're building your tenth AI product and you've already validated the market, a dedicated internal team starts to make financial sense. If you're building your first one, it almost never does.

What Makes an AI Product More Expensive Than It Should Be

Scope creep in week one

The most expensive thing in AI development isn't the model. It's building things you don't need yet. The pattern is consistent: a founder or CTO shows up to a kickoff with a list of 40 features, half of which are premature optimizations for problems that don't exist at week four. Every feature you build that isn't core to validating your hypothesis is money spent defending a decision you haven't had to make yet.

The right question at kickoff isn't "what do we want?" It's "what's the minimum we need to learn?" The answer to that question shapes the budget more than anything else.

Architecture decisions deferred too long

Choosing the wrong data architecture in week one doesn't hurt you in week two. It costs you 10 weeks of refactoring at month four when the product is under real load and you realize the foundation doesn't hold. Architecture decisions have compounding consequences. Teams that delay them — because they want to "see how it goes" first — routinely pay three to five times more to fix the result than it would have cost to get it right at the start.

This is why most AI products fail after the demo: not because the model stops working, but because the system around it was designed for a demo, not for production.

Building for scale before you've validated anything

Over-engineering is its own budget leak. RAG pipelines with multi-index retrieval, custom embedding models, real-time data synchronization across distributed systems — all of it justified by "we'll need this eventually." Building for scale before you've found product-market fit is how teams burn $200K on infrastructure that supports a product nobody uses.

Start with what works at 100 users. Build for 10,000 when you have 1,000. Build for 1,000,000 when you have 100,000. The cost of scaling working architecture is orders of magnitude lower than the cost of building for scale that never arrives.

FAQ

Can I build an AI MVP for under $50K?

Yes — if the scope is genuinely narrow. A single-use-case tool, clean input data, no enterprise integrations, and a team that moves fast can ship something real in that range. The risk is building a PoC and calling it an MVP. A true MVP is production-ready: it handles real users, real edge cases, and real load. If your $40K project can't survive its first week of real traffic, it wasn't an MVP. It was a demo.

How long does it take to build an AI product?

A production-ready MVP, properly scoped and properly resourced, takes 4–8 weeks. The teams that take 6 months aren't building something fundamentally more complex. They're making decisions slower, changing scope midway, or carrying technical debt from early architecture choices. Our average across 50+ products is 4 weeks. That's not about moving recklessly — it's about knowing what to cut and making the right calls in week one.

What's the difference between a proof of concept and a production-ready product?

A PoC answers the question: can this work? A production-ready product answers the question: will this hold when real users use it at scale? The gap between them is mostly architecture and testing — not features. A PoC that was never designed to scale will need to be partially or fully rebuilt before it's production-ready. Teams that don't understand this distinction often pay for two builds instead of one.

What ongoing costs should I budget for after launch?

Plan for 15–25% of your initial build cost annually in maintenance, depending on complexity. This covers model updates, infrastructure costs, security patches, and feature iterations based on real user behavior. AI products that use third-party model APIs also carry variable inference costs that scale with usage — typically modest at MVP stage, but worth modeling before you're surprised by a bill.
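To see how these line items add up, the Python sketch below combines the initial build, annual maintenance at the 15–25% rate above, and a flat monthly inference bill. The $100K build cost and $500/month inference figure are hypothetical placeholders, and holding inference flat is a simplification; in practice it scales with usage.

```python
def total_cost_of_ownership(build_cost: float, years: int,
                            maintenance_rate: float,
                            monthly_inference: float) -> float:
    """Initial build + annual maintenance (share of build cost) + inference."""
    maintenance = build_cost * maintenance_rate * years
    inference = monthly_inference * 12 * years  # flat; real usage grows
    return build_cost + maintenance + inference


BUILD = 100_000          # hypothetical MVP build cost, USD
MONTHLY_INFERENCE = 500  # hypothetical API bill, USD per month

for rate in (0.15, 0.25):  # the 15-25% annual maintenance range
    tco = total_cost_of_ownership(BUILD, years=3, maintenance_rate=rate,
                                  monthly_inference=MONTHLY_INFERENCE)
    print(f"3-year TCO at {rate:.0%} maintenance: ${tco:,.0f}")
```

With these inputs, three years of ownership adds $63K–$93K on top of the $100K build, so the post-launch budget is worth planning alongside the build itself rather than after it.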

What This Means in Practice

The budget question matters. But the more important question is: who's making the architecture decisions, and have they made them before?

McKinsey's 2025 State of AI research is direct on this point: only about 6% of organizations are seeing meaningful financial returns from AI, and what separates them from the rest isn't better models or bigger budgets. It's fundamentally different workflows and faster, cleaner decision-making at the product level.

The companies getting real ROI aren't necessarily spending more. They're spending it on the right decisions at the right time — typically in the first two weeks of a build.

At Imaginary Space, we've shipped 50+ AI products across healthcare, fintech, govtech, and construction. Most of them in under four weeks. The cost range is the same as what you'll find anywhere else. What's different is what happens inside those four weeks.

If you're scoping an AI build and want a straight answer on what it should actually cost, our process page is a good starting point.