Vibe Coding Is Not an Enterprise Strategy. Here's What Is

Vibe coding works. That's not the problem.

When Andrej Karpathy coined the term in early 2025, it captured something real: AI tools had gotten good enough that you could describe what you wanted and get working code back. Fast. A quarter of Y Combinator's Winter 2025 batch had codebases that were 95% AI-generated. Founders were shipping MVPs in days. The demos looked great.

Then the products hit production.

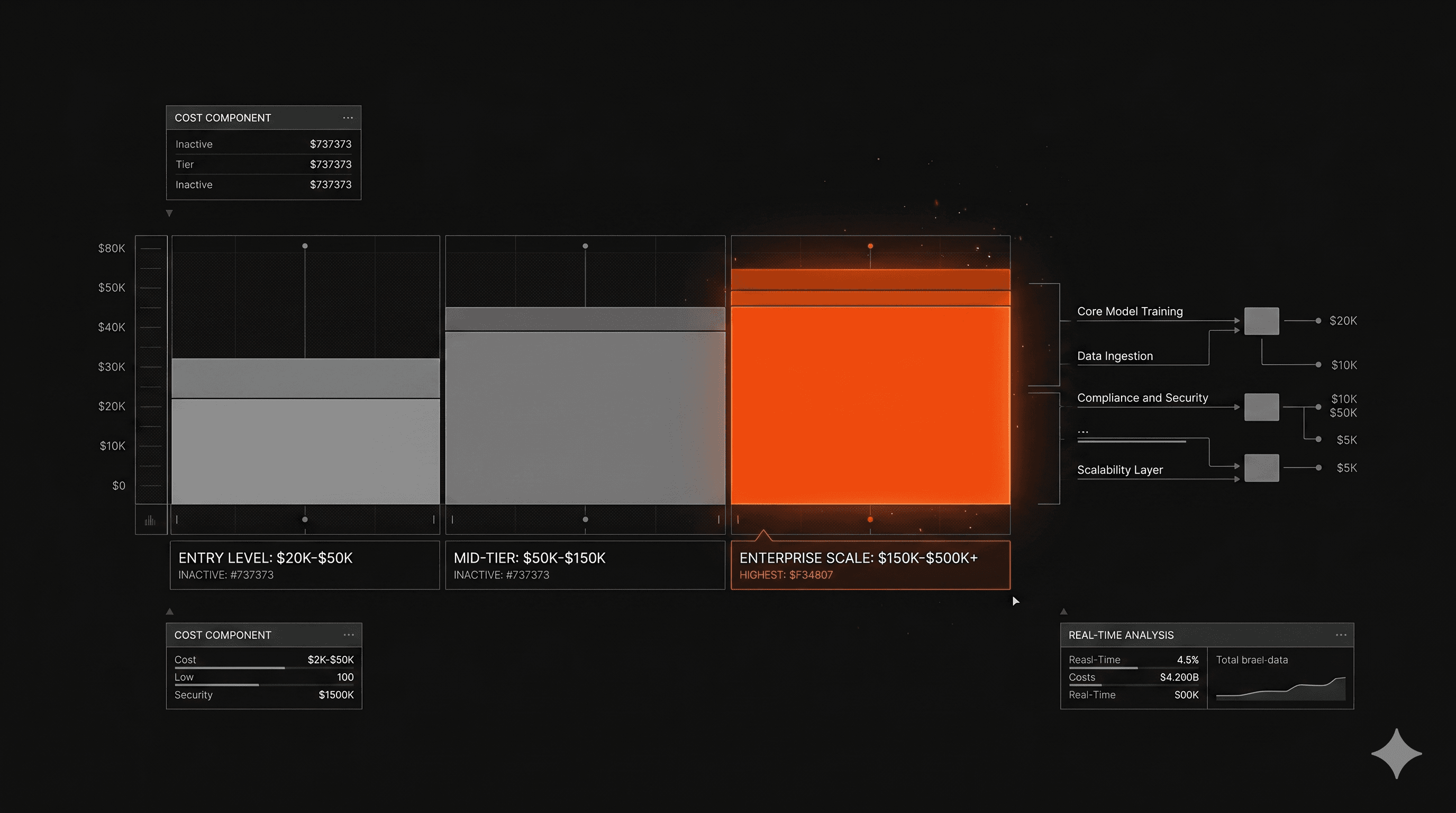

The gap between "it works in a demo" and "it works in enterprise" is where most AI projects die. Half of AI projects fail to reach production. The cause is usually not that the models aren't capable. It is the architectural decisions made (or skipped) in the first week. In one 2025 analysis, around 45% of AI-generated code samples introduced vulnerabilities from the OWASP Top 10. Enterprise software has to work every time, under load, with real user data, inside existing systems that took years to build.

Vibe coding is a tool. A powerful one. But it's not a strategy. What separates a prototype from a production-ready AI product isn't the speed of generation — it's what happens before the first line of code gets written.

Why Vibe Coding Breaks at Enterprise Scale

The vibe coding failure mode isn't random. It's predictable. A startup called Enrichlead built their entire platform using AI-generated code, shipped it fast, and watched it collapse within 72 hours of launch. The AI had placed all security logic on the client side — a fundamental architectural error that looked invisible until real users started changing values in the browser console and getting free access to paid features. The founder couldn't audit 15,000 lines of code he didn't write. The project shut down.
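Enrichlead's actual code isn't public. The following is therefore a minimal, hypothetical Python sketch of the class of error described. It shows an authorization decision that trusts a value the browser controls, next to the server-side check that should have been there. All names (`PLANS`, `PAID_FEATURES`, `Request`) are illustrative assumptions.

```python
from dataclasses import dataclass

# Hypothetical server-side record of each user's plan. In a real system this
# would live in a database the client cannot write to.
PLANS: dict[str, str] = {"alice": "free", "bob": "paid"}

PAID_FEATURES = {"export_report", "bulk_enrich"}


@dataclass
class Request:
    user_id: str  # assumed to come from a verified session, not the request body
    feature: str
    client_claims_paid: bool = False  # attacker-controlled; must never be trusted


def is_allowed_client_side(req: Request) -> bool:
    # The anti-pattern: the decision depends on a value the browser sends.
    return req.feature not in PAID_FEATURES or req.client_claims_paid


def is_allowed_server_side(req: Request) -> bool:
    # The fix: look up the plan on the server and ignore client claims.
    if req.feature not in PAID_FEATURES:
        return True
    return PLANS.get(req.user_id) == "paid"


tampered = Request(user_id="alice", feature="export_report", client_claims_paid=True)
print(is_allowed_client_side(tampered))  # True: a flipped flag unlocks the feature
print(is_allowed_server_side(tampered))  # False: the server checks its own records
```

Both functions look plausible in isolation. The difference only shows up when someone asks which side of the network owns the decision.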

This isn't a story about bad AI. It's a story about missing architecture. The tool did what it was asked. Nobody asked the right questions first.

AI co-authored pull requests show security vulnerabilities at 2.74 times the rate of thoroughly reviewed human code. That's not a reason to avoid AI. It's a reason to build a process around it.

What "Production-Ready" Actually Means

Production-ready isn't a speed threshold. It's an architecture standard.

It means the system holds under load. It means other engineers can read and modify the code six months from now. It means security isn't bolted on after the fact. It means integrations with existing enterprise systems don't break when something upstream changes.

"Production-ready means traditional software development standards — architecture that holds, code that scales — but built with the speed advantage that AI gives you when you actually know how to use it." That's how our CTO Franco Albarracin describes it. The AI changes the speed of execution. It doesn't change what good looks like.

What Is Vibe Coding Actually Good For?

Direct answer: prototyping, internal tooling, low-stakes MVPs, and early-stage validation. If the cost of failure is low and the goal is learning, vibe coding accelerates everything.

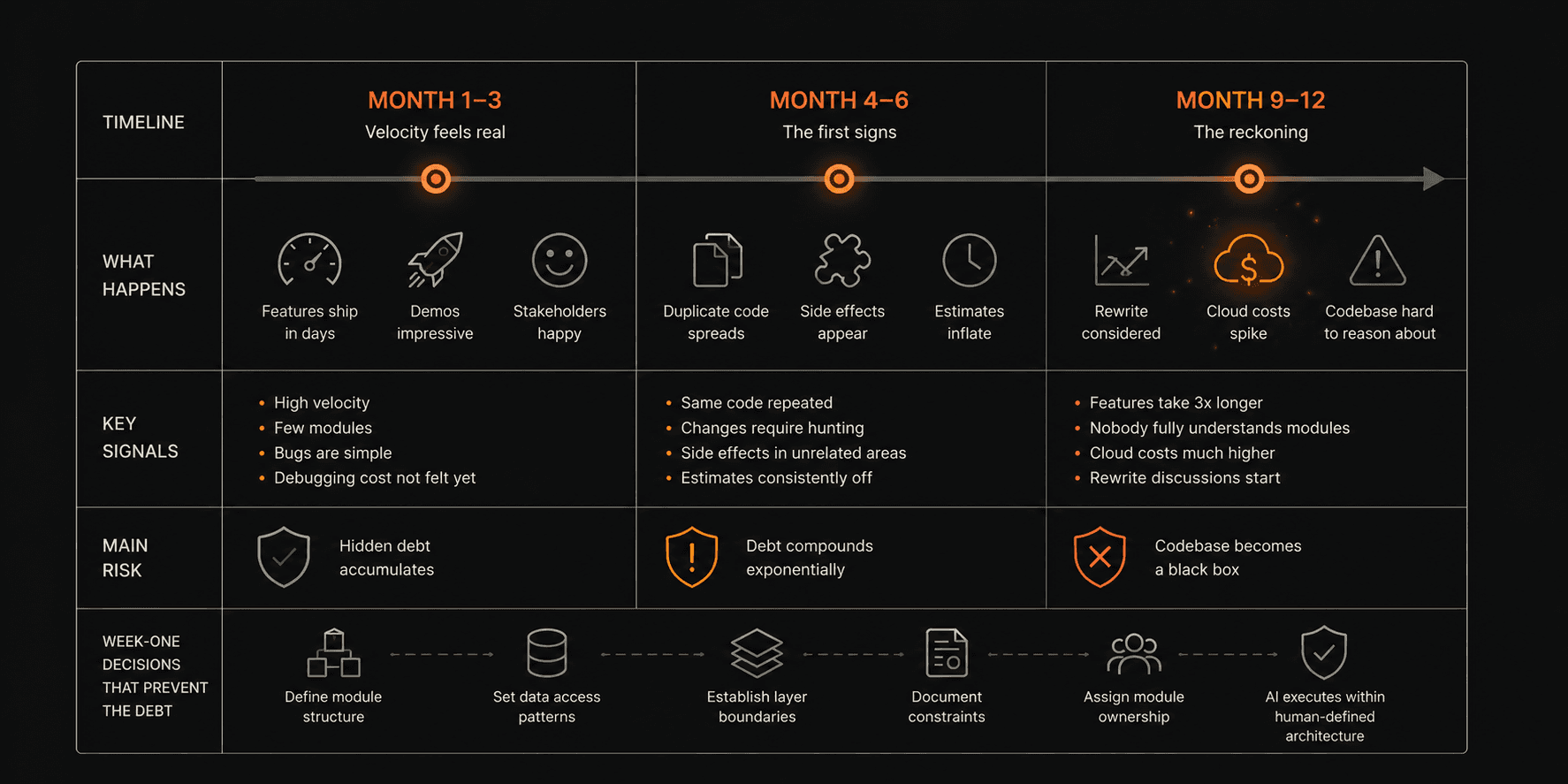

Where it consistently breaks down: systems with SLAs, compliance requirements, complex integrations with legacy enterprise infrastructure, or code that multiple engineers need to maintain over time. Vibe coding compresses implementation time but defers key engineering decisions — security boundaries, failure modes, interface contracts — that traditional workflows force earlier. When those decisions get deferred, they don't disappear. They compound.

James Gosling, the creator of Java, put it plainly: as soon as a vibe-coded project gets complicated, things "pretty much always blow their brains out." Enterprise software, by definition, is complicated. It has to work every time.

The skill isn't knowing how to vibe code. It's knowing when to stop.

The Decision That Determines Everything

Every team we've worked with that ran into trouble did the same thing: they started building immediately. They wanted to show progress. The AI made it easy to generate screens, endpoints, and features before anyone had agreed on what the system actually needed to do.

Real speed comes from three things: defining the right problem before touching code, making critical architecture decisions in week one, and knowing exactly what not to build. Those decisions — data model, API structure, authentication layer, how the AI components integrate with the rest of the stack — are cheap to change on day three and expensive to change on day thirty.
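One way to make the "how the AI components integrate" decision explicit is to write the interface contract before any model is wired in. The Python sketch below is a hypothetical example that assumes a document-classification feature. `ClassificationRequest`, `ClassificationResult`, `Classifier`, and `KeywordStub` are illustrative names and are not part of any library.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class ClassificationRequest:
    document_id: str
    text: str


@dataclass(frozen=True)
class ClassificationResult:
    document_id: str
    label: str
    confidence: float  # contract: always in [0.0, 1.0]

    def __post_init__(self) -> None:
        # Enforce the contract at the boundary instead of trusting the model.
        if not 0.0 <= self.confidence <= 1.0:
            raise ValueError(f"confidence out of range: {self.confidence}")


class Classifier(Protocol):
    """The agreed interface: any model, vendor, or stub must satisfy it."""

    def classify(self, request: ClassificationRequest) -> ClassificationResult: ...


class KeywordStub:
    """Placeholder implementation so the rest of the stack can be built on day one."""

    def classify(self, request: ClassificationRequest) -> ClassificationResult:
        label = "invoice" if "invoice" in request.text.lower() else "other"
        return ClassificationResult(request.document_id, label, 0.5)


def route(classifier: Classifier, request: ClassificationRequest) -> str:
    # Downstream code depends only on the contract, not on a specific model.
    return classifier.classify(request).label


print(route(KeywordStub(), ClassificationRequest("doc-1", "Invoice #42 attached")))
```

Downstream code depends on `Classifier` rather than on a particular model. As a result, replacing the stub with a real model later is a local change rather than a rewrite.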

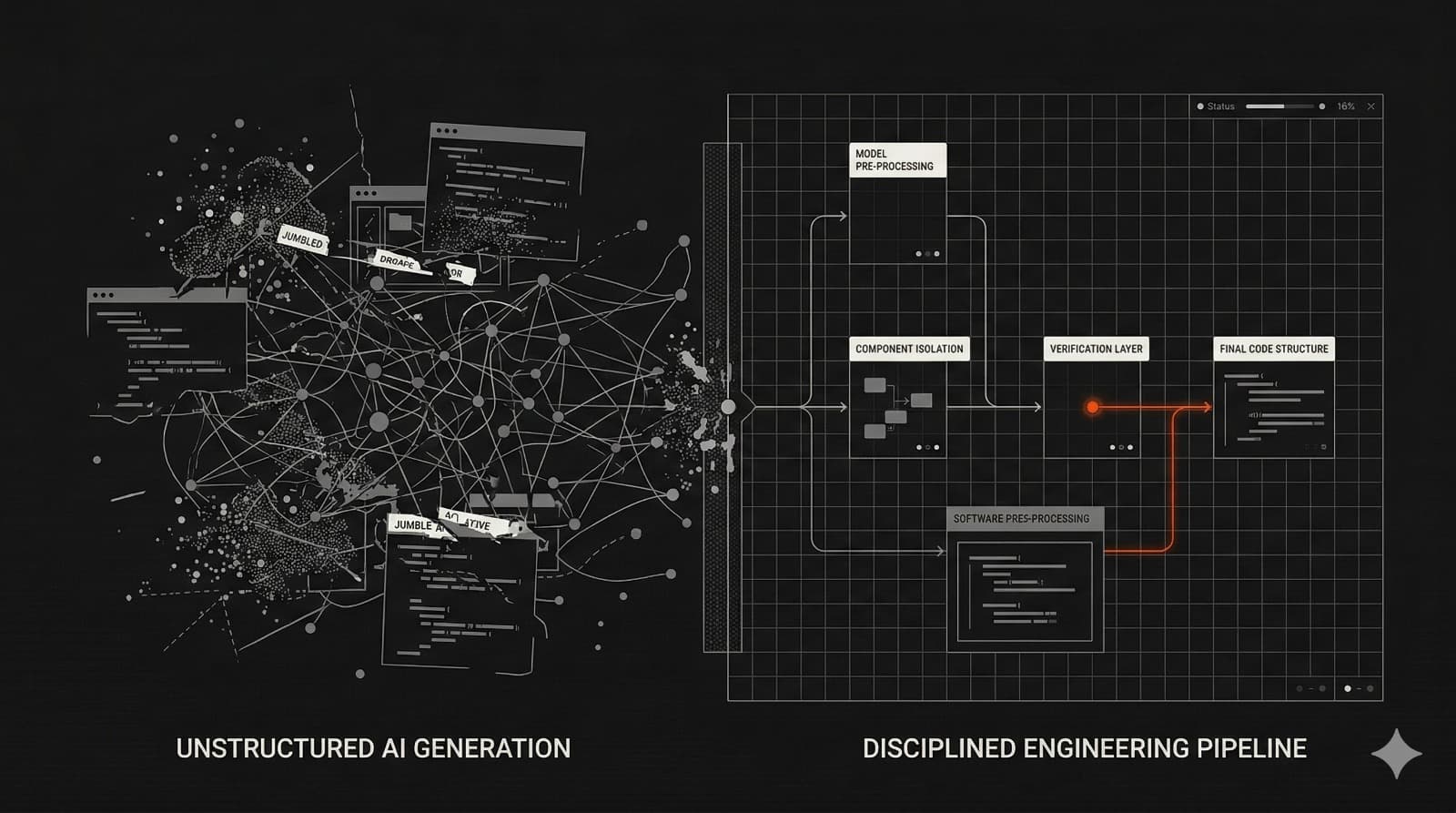

AI-generated code creates implicit architectural decisions that teams often don't discover until they need to change something. The model makes choices about how to structure things. If nobody on the team understands why it made those choices, the codebase becomes a black box that's fast to generate and slow to maintain.

Why Most Studios Get Week One Wrong

Pressure to show progress is real. Clients want to see movement. But there's a difference between progress and direction.

In a 2025 METR study, experienced open-source developers working in repositories they knew well were 19% slower with AI tools, even though they believed the tools had made them faster. One likely reason is that generation speeds up while the review burden grows. More code, faster, still needs to be understood and validated. Teams that skip this step don't move faster. They move forward without knowing where they're going.

The trap isn't vibe coding itself. It's treating generation speed as a proxy for delivery quality.

Can Enterprise Teams Use Vibe Coding Responsibly?

Yes. With three non-negotiable conditions.

First: a clear spec before the first prompt. Not a vague goal — a precise definition of the problem, the constraints, the performance requirements, and what the system must not do. Spec-driven development — writing the spec first, then handing it to the AI — is the emerging best practice among teams that ship production-ready AI products consistently.

Second: a senior engineer who owns the architecture. Not someone who reviews the output after the fact. Someone who sets the standards before the AI writes a single line, and who understands the system well enough to catch decisions the model made that look right but aren't.

Third: a structured review process before anything touches production. This is what our pod structure is designed for: every build runs with two developers and a project manager, led by a senior engineer who sets technical standards from day one. The senior engineer isn't a gatekeeper at the end — they're the architect from the beginning.

Speed comes from not having to redo things. That requires getting the structure right before the speed starts.

What an Enterprise AI Development Process Actually Looks Like

We've shipped over 50 AI products. The pattern across the ones that delivered fastest is consistent: the most productive weeks were the ones preceded by the clearest problem definition.

For MeasureAI — a platform that analyzes HVAC systems in construction sites using a multi-model AI architecture with computer vision, OCR, and ensemble logic — the first week wasn't spent generating code. It was spent agreeing on exactly what the AI needed to detect, what confidence thresholds were acceptable, and how the system would handle edge cases before they appeared in production. When the client saw the first production build, his reaction was: "This is the bit that I was most scared about and I think it was the riskiest part for me to see it work." It worked because the risk had been accounted for before anyone wrote a line.
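MeasureAI's actual thresholds and ensemble method aren't described here. The following hypothetical Python sketch shows what "agreeing on confidence thresholds and edge cases" can turn into once it is written down. Per-model scores are combined, here by simple averaging (an assumption), and each detection is routed to accept, human review, or reject. The threshold values and names are illustrative only.

```python
from dataclasses import dataclass
from statistics import mean

# Hypothetical thresholds; in practice these would be agreed with the client in week one.
ACCEPT_AT = 0.85
REVIEW_AT = 0.60


@dataclass
class Detection:
    label: str
    scores: dict[str, float]  # per-model confidence, e.g. {"vision": 0.9, "ocr": 0.8}


def decide(detection: Detection) -> str:
    """Return 'accept', 'review', or 'reject' for one detection."""
    if not detection.scores:
        # Edge case decided up front: no model produced a score, so a human looks at it.
        return "review"
    # Simple ensemble: average the per-model confidences (an assumption;
    # weighted voting or stacking are common alternatives).
    confidence = mean(detection.scores.values())
    if confidence >= ACCEPT_AT:
        return "accept"
    if confidence >= REVIEW_AT:
        return "review"
    return "reject"


print(decide(Detection("duct", {"vision": 0.92, "ocr": 0.88})))  # accept (mean 0.90)
print(decide(Detection("vent", {"vision": 0.70, "ocr": 0.55})))  # review (mean 0.625)
print(decide(Detection("pipe", {"vision": 0.30, "ocr": 0.40})))  # reject (mean 0.35)
print(decide(Detection("unknown", {})))                          # review (no scores)
```

Deciding in advance what happens with an empty or borderline result means that case is designed, not discovered in production.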

The AI didn't make that possible by itself. The spec did.

The Bug Bash — What Production-Readiness Looks Like in Practice

Two weeks before every delivery, we run a Bug Bash: the team and the client sit down together and actively try to break the product. Not surface-level QA — deliberate attempts to find edge cases, expose security gaps, trigger failure modes. Then pen testing on critical features. Then delivery.
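A Bug Bash is a human exercise, but the edge cases it surfaces are worth capturing so they stay fixed. One common way to do that is a table of adversarial inputs run against the code under test, shown here as a hypothetical Python sketch. `parse_quantity` and its range of 1 to 1000 are illustrative assumptions and are not part of any product described above.

```python
def parse_quantity(raw: str) -> int:
    """Hypothetical validator for a quantity field: an integer from 1 to 1000."""
    value = int(raw.strip())  # raises ValueError on non-numeric input
    if not 1 <= value <= 1000:
        raise ValueError(f"quantity out of range: {value}")
    return value


# Each case is something a Bug Bash participant might try, paired with the expected outcome.
CASES = [
    ("5", 5),
    (" 12 ", 12),
    ("0", ValueError),              # boundary just below the minimum
    ("1001", ValueError),           # boundary just above the maximum
    ("-3", ValueError),             # negative value
    ("1e3", ValueError),            # scientific notation
    ("", ValueError),               # empty input
    ("5; DROP TABLE", ValueError),  # injection-shaped input
]


def run_cases() -> list[str]:
    """Run every case and return a description of each mismatch."""
    failures = []
    for raw, expected in CASES:
        try:
            outcome = parse_quantity(raw)
        except ValueError:
            outcome = ValueError
        if outcome != expected:
            failures.append(f"{raw!r}: expected {expected}, got {outcome}")
    return failures


print(run_cases() or "all cases behaved as expected")
```

Each new break found during the session becomes one more row in `CASES`. This keeps the fix from quietly regressing after delivery.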

This is a named, structured process — not ad hoc testing that happens when someone has time. It exists because production-ready is a standard, not a feeling. The goal isn't to find zero bugs. The goal is to find the bugs that matter before real users do.

That two-week window before delivery is where production-grade systems get separated from polished prototypes.

What This Means for Your AI Strategy

Vibe coding democratized the generation of software. That's genuinely valuable. The ability to move from idea to working prototype in hours, to test hypotheses cheaply, to build internal tools without a full engineering team — these are real gains.

But the enterprise problem was never about generation speed. It was about delivery reliability. An AI product that ships fast and breaks in production doesn't save runway. It burns it, and then costs more time to fix what was built wrong.

The teams that use AI most effectively treat it as a high-speed draft generator inside a disciplined pipeline: strong specs, clear architecture, senior oversight, and a structured process before anything touches production. The AI handles what AI is good at. Engineers handle what requires judgment.

That combination — AI speed with engineering discipline — is what actually gets products to production at Siemens scale, or SignalFire scale, or any enterprise where the software has to work every time.

Imaginary Space is an AI-native product studio. We've shipped 50+ production-ready products for VC-backed startups and enterprise teams, averaging a 4-week MVP timeline. If you're building an AI product and want to understand how we approach architecture from week one, the details are on our process page. If you want to learn to build this way yourself, Imaginary Space Labs is where we teach it.