Microsoft Silica: What 4.8TB in a Piece of Glass Actually Means for How We Build Systems

Most of the data the world generates today will be gone within 20 years. Not because nobody wants to keep it, but because the media it lives on degrades, and migrating it to new media every few years is expensive, energy-intensive, and increasingly unsustainable.

Magnetic tapes last 10 to 30 years. Hard drives typically fare worse, with service lives usually measured in years rather than decades. The world's data generation is projected to reach 394 zettabytes by 2028, nearly triple what it was in 2023. The infrastructure for keeping any of that permanently doesn't exist yet.

On February 18, 2026, Microsoft Research published a paper in Nature describing a system that stores 4.8TB of data inside a 2mm-thick glass plate, with accelerated aging tests suggesting the data would remain readable for more than 10,000 years. The paper is real. The numbers are real. It's also not a product yet, and the path to commercialization has more open questions than the headlines suggest.

Here's what actually matters.

What Microsoft Silica Actually Does

Femtosecond lasers and voxels — the mechanism in plain language

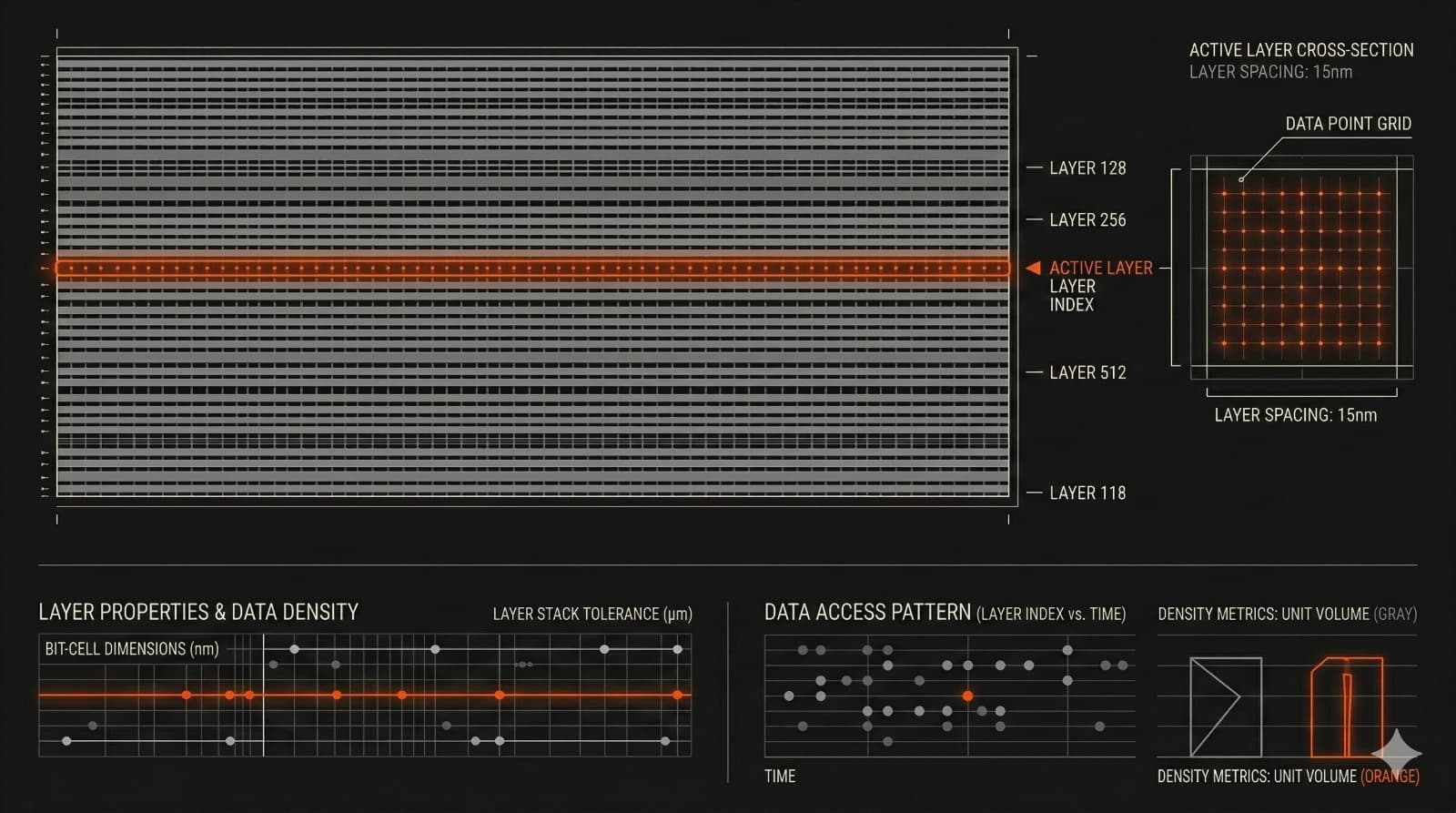

Project Silica encodes data by firing femtosecond laser pulses, bursts measured in quadrillionths of a second, into the interior of a glass plate. The pulses create microscopic structural modifications inside the glass called voxels: the 3D equivalent of pixels. Each modification changes how light passes through that point in the glass. An automated microscope reads those changes back, and machine learning models handle noise and optical interference between adjacent voxels during decoding.
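Because voxels are the 3D counterpart of pixels, one simple way to picture the layout is a stack of 2D grids, one per layer, with a linear position in the data stream mapping to a (layer, row, column) address. The sketch below assumes a layer-by-layer fill order and uses hypothetical grid dimensions; the article does not describe Silica's actual physical layout.

```python
from typing import NamedTuple


class VoxelAddress(NamedTuple):
    layer: int  # depth index inside the glass
    row: int
    col: int


def voxel_address(index: int, rows: int, cols: int) -> VoxelAddress:
    """Map a linear voxel index to (layer, row, col), filling one whole layer
    before moving deeper. Hypothetical layout, for illustration only."""
    per_layer = rows * cols
    layer, within_layer = divmod(index, per_layer)
    row, col = divmod(within_layer, cols)
    return VoxelAddress(layer, row, col)


def linear_index(addr: VoxelAddress, rows: int, cols: int) -> int:
    """Inverse of voxel_address."""
    return (addr.layer * rows + addr.row) * cols + addr.col


print(voxel_address(1_000_005, rows=1000, cols=1000))
```

With a 1000 by 1000 grid per layer, index 1,000,005 lands in the second layer (layer 1), row 0, column 5.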

The key property is that the data is embedded inside the material itself — not on a surface coating that can oxidize or peel. Glass is chemically stable, resistant to moisture, heat, and electromagnetic interference. The data doesn't sit on the glass. It is the glass.

The numbers that matter: 4.8TB, 301 layers, 2mm

The system published in Nature achieves a data density of 1.59 gigabits per cubic millimeter, storing 4.84TB of usable data across 301 layers in a 120mm-square, 2mm-thick glass plate. For context, that's roughly the equivalent of 2 million printed books in a plate about as wide as a DVD.
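A quick back-of-envelope check helps put these figures together. The sketch assumes decimal units (1 TB = 10^12 bytes) and treats the whole 120mm × 120mm × 2mm plate as storage volume; both are assumptions, not details from the paper.

```python
# Back-of-envelope check on the quoted plate figures.
# Assumptions: decimal units, and the full plate volume counts as storage volume.
PLATE_SIDE_MM = 120
PLATE_THICKNESS_MM = 2
USABLE_TB = 4.84
LAYERS = 301
REPORTED_DENSITY_GBIT_PER_MM3 = 1.59

volume_mm3 = PLATE_SIDE_MM * PLATE_SIDE_MM * PLATE_THICKNESS_MM  # 28,800 mm^3
usable_bits = USABLE_TB * 1e12 * 8
implied_density = usable_bits / volume_mm3 / 1e9  # usable Gbit per mm^3
per_layer_gb = USABLE_TB * 1e3 / LAYERS  # usable GB per layer

print(f"plate volume:        {volume_mm3:,} mm^3")
print(f"usable density:      {implied_density:.2f} Gbit/mm^3 "
      f"(reported {REPORTED_DENSITY_GBIT_PER_MM3})")
print(f"usable data / layer: {per_layer_gb:.1f} GB")
```

Spreading usable data over the whole plate gives about 1.34 Gbit/mm³, below the reported 1.59, and about 16 GB per layer. One plausible reading is that the reported density counts raw bits before error-correction overhead, or only the written region of the plate; the article doesn't say which.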

Write throughput with a single beam reaches 25.6 megabits per second. With four parallel beams, the team demonstrated 65.9 megabits per second. The accelerated aging tests — repeatedly heating inscribed glass plates to extreme temperatures — suggest data lifetimes exceeding 10,000 years at room temperature.
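Accelerated aging tests rely on the idea that degradation speeds up predictably with temperature, so results measured under heat can be scaled back to room temperature. A common model for this is the Arrhenius relation. The sketch below assumes that model; the article does not say which model or parameters the paper used, and the activation energy, temperatures, and stress lifetime in the usage line are placeholders, not measured values.

```python
import math

BOLTZMANN_EV_PER_K = 8.617333262e-5  # Boltzmann constant in eV/K


def arrhenius_acceleration(ea_ev: float, use_temp_c: float, stress_temp_c: float) -> float:
    """Factor by which degradation runs faster at stress_temp_c than at use_temp_c,
    under the Arrhenius model with activation energy ea_ev (in eV)."""
    t_use = use_temp_c + 273.15
    t_stress = stress_temp_c + 273.15
    return math.exp(ea_ev / BOLTZMANN_EV_PER_K * (1.0 / t_use - 1.0 / t_stress))


def extrapolated_lifetime_years(stress_lifetime_hours: float, ea_ev: float,
                                use_temp_c: float, stress_temp_c: float) -> float:
    """Scale a lifetime observed under heat stress back to the use temperature."""
    factor = arrhenius_acceleration(ea_ev, use_temp_c, stress_temp_c)
    return stress_lifetime_hours * factor / (24 * 365.25)


# Placeholder inputs, chosen only to show how the pieces fit together.
print(extrapolated_lifetime_years(stress_lifetime_hours=100.0, ea_ev=1.0,
                                  use_temp_c=25.0, stress_temp_c=300.0))
```

The key design point is that the result is only as good as the assumed activation energy: small changes to it move the extrapolated lifetime by orders of magnitude.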

Those are genuinely impressive numbers. They're also the beginning of the story, not the end.

The Two Systems — and Why Microsoft Is Still Deciding

Birefringent voxels in fused silica — higher density, harder to make

The original Project Silica approach uses high-purity fused silica glass and encodes data as birefringent voxels: microscopic structures that alter the polarization of light passing through them. Different polarization orientations encode different data symbols. Each voxel takes one of eight distinct azimuth (orientation) levels, so it can carry log2(8) = 3 bits, and is read back using polarization-resolved microscopy.
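To make "eight azimuth levels per voxel" concrete, here is a minimal sketch that packs bytes into 3-bit symbols, maps each symbol to an orientation angle, and decodes noisy angles by picking the nearest level. It assumes the eight levels are spread evenly over 180 degrees (an orientation axis repeats every 180 degrees); the function names are hypothetical, and the real system uses machine-learning decoding and error correction rather than this simple nearest-level rule.

```python
LEVELS = 8                 # eight azimuth levels, as described above
BITS_PER_SYMBOL = 3        # log2(8)
STEP_DEG = 180.0 / LEVELS  # assumption: levels spread evenly over 180 degrees


def bytes_to_symbols(data: bytes) -> list[int]:
    """Split bytes into 3-bit symbols, zero-padding the final symbol."""
    bits = "".join(f"{b:08b}" for b in data)
    bits += "0" * (-len(bits) % BITS_PER_SYMBOL)
    return [int(bits[i:i + BITS_PER_SYMBOL], 2)
            for i in range(0, len(bits), BITS_PER_SYMBOL)]


def symbols_to_bytes(symbols: list[int], n_bytes: int) -> bytes:
    """Reassemble the first n_bytes bytes from 3-bit symbols (drops padding)."""
    bits = "".join(f"{s:03b}" for s in symbols)
    return bytes(int(bits[i:i + 8], 2) for i in range(0, n_bytes * 8, 8))


def symbol_to_azimuth(symbol: int) -> float:
    """Orientation angle (degrees) written for a symbol."""
    return symbol * STEP_DEG


def azimuth_to_symbol(angle_deg: float) -> int:
    """Nearest-level decision; angles wrap every 180 degrees."""
    return round((angle_deg % 180.0) / STEP_DEG) % LEVELS


message = b"Silica"
written = [symbol_to_azimuth(s) for s in bytes_to_symbols(message)]
measured = [a + 5.0 for a in written]  # simulated read error, under half a step
decoded = symbols_to_bytes([azimuth_to_symbol(a) for a in measured], len(message))
print(decoded)  # b'Silica'
```

Any read error smaller than half the 22.5-degree spacing still decodes to the right symbol, which is why packing more levels into the same angle range makes reading harder.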

This system achieves the higher performance numbers: 4.84TB per platter, 25.6 Mbit/s write throughput, and an energy cost of 10.1 nanojoules per bit. The limitation is the material. High-purity fused silica is difficult to manufacture and available from only a small number of sources. Scaling it commercially means solving a supply chain problem before solving a storage problem.
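A per-bit energy figure is easier to judge at the scale of a whole platter. The sketch assumes the 10.1 nJ/bit figure applies uniformly to every usable bit and uses decimal units; the article does not say which parts of the system that figure covers.

```python
# What 10.1 nJ/bit means for one full 4.84 TB fused silica plate.
# Assumption: the per-bit energy applies to every usable bit (decimal TB).
NJ_PER_BIT = 10.1
USABLE_TB = 4.84

bits = USABLE_TB * 1e12 * 8
joules = bits * NJ_PER_BIT * 1e-9
kwh = joules / 3.6e6  # 1 kWh = 3.6 MJ

print(f"{joules / 1e3:,.0f} kJ, about {kwh:.2f} kWh per plate")
```

Under those assumptions, writing a full plate costs roughly 391 kJ, about 0.11 kWh, and the plate needs no power afterward to retain the data.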

Phase voxels in borosilicate — cheaper, simpler, lower density

The new approach demonstrated in the Nature paper uses ordinary borosilicate glass — the same material in kitchen cookware and oven doors — and encodes data using phase voxels: isotropic modifications that change the material's refractive index. Each phase voxel is written with a single laser pulse. The reading hardware drops from three or four cameras to one.

The tradeoff is density. Phase voxels in borosilicate achieve 2.02TB per platter at 0.678 Gbit/mm³ — roughly 40% of the birefringent fused silica system. The writing and reading hardware is simpler and cheaper. The material is globally available. Extending the technology to borosilicate is the advance that makes commercialization plausible — not because the numbers are better, but because the supply chain and manufacturing complexity are manageable.
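The "roughly 40%" figure can be checked directly from the quoted numbers. This small comparison uses only values stated in the article; the class and field names are illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GlassSystem:
    name: str
    capacity_tb: float       # usable capacity per platter, as quoted
    density_gbit_mm3: float  # volumetric density, as quoted


FUSED_SILICA = GlassSystem("birefringent / fused silica", 4.84, 1.59)
BOROSILICATE = GlassSystem("phase / borosilicate", 2.02, 0.678)


def relative(a: GlassSystem, b: GlassSystem) -> tuple[float, float]:
    """Return (capacity ratio, density ratio) of system a relative to system b."""
    return a.capacity_tb / b.capacity_tb, a.density_gbit_mm3 / b.density_gbit_mm3


cap_ratio, density_ratio = relative(BOROSILICATE, FUSED_SILICA)
print(f"capacity: {cap_ratio:.0%}, density: {density_ratio:.0%}")  # capacity: 42%, density: 43%
```

Both ratios land a little above 40%, so the capacity and density figures are consistent with each other.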

Why the design decision hasn't been made yet

Microsoft has not said which system it would deploy. The two voxel types rely on fundamentally different physical mechanisms, different glass materials, and different reading hardware. Choosing one effectively defines the entire product architecture, and no such choice has been announced.

What the Nature paper establishes is that both systems work end-to-end: write, store, read, decode. That's the scientific milestone. The engineering and commercialization decisions come after.

What Is Microsoft Silica Glass Storage Actually Good For?

The honest answer is narrower than the headlines suggest.

Silica is a write-once, read-rarely storage medium. Once data is written into glass, it cannot be modified or overwritten. That's a feature for certain use cases — immutability is exactly what compliance archives, legal records, and scientific datasets need — and a hard constraint for everything else.
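Write-once semantics change how software has to treat storage: an "update" can only be expressed as a new record. The toy model below illustrates that constraint. `WriteOnceStore` is a hypothetical class for illustration, not a Silica API.

```python
class WriteOnceStore:
    """Toy model of write-once storage: each key can be written exactly once
    and read any number of times. Hypothetical, not a Silica API."""

    def __init__(self) -> None:
        self._records: dict[str, bytes] = {}

    def write(self, key: str, data: bytes) -> None:
        if key in self._records:
            raise PermissionError(f"{key!r} is already written and cannot be modified")
        self._records[key] = bytes(data)  # store an immutable copy

    def read(self, key: str) -> bytes:
        return self._records[key]


store = WriteOnceStore()
store.write("contract-2026-001/v1", b"original terms")
try:
    store.write("contract-2026-001/v1", b"amended terms")
except PermissionError as err:
    print(err)
# A correction becomes a new, separately keyed record instead of an overwrite.
store.write("contract-2026-001/v2", b"amended terms")
```

The versioned keys here are one possible convention; the point is that history is preserved by construction, which is exactly what compliance archives want and what frequently changing data cannot tolerate.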

The write speed narrows the use case further. At the single-beam rate of 25.6 Mbit/s, filling a 4.84TB fused silica plate takes roughly 420 hours; even at the four-beam rate of 65.9 Mbit/s it takes more than 160 hours. A 2.02TB borosilicate plate at 65.9 Mbit/s takes roughly 70 hours. Silica is not a general-purpose storage solution. It's cold storage for data that must be preserved reliably for decades or centuries without active maintenance: government records, genomic datasets, financial compliance archives, cultural heritage preservation, long-term scientific data.
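Fill times follow directly from capacity divided by throughput. The sketch assumes decimal units, a constant sustained write rate, and no time for verification passes; it also pairs capacities and rates purely as arithmetic, since the article doesn't specify which glass system the four-beam rate was measured on.

```python
def fill_hours(capacity_tb: float, throughput_mbit_s: float) -> float:
    """Hours to write capacity_tb (decimal TB) at a sustained throughput in Mbit/s."""
    bits = capacity_tb * 1e12 * 8
    return bits / (throughput_mbit_s * 1e6) / 3600


for label, tb, rate in [
    ("4.84 TB @ 25.6 Mbit/s (single beam)", 4.84, 25.6),
    ("4.84 TB @ 65.9 Mbit/s (four beams)", 4.84, 65.9),
    ("2.02 TB @ 65.9 Mbit/s (four beams)", 2.02, 65.9),
]:
    print(f"{label}: {fill_hours(tb, rate):,.0f} h")
```

This prints roughly 420, 163, and 68 hours respectively, which is why write throughput, not capacity, is the bottleneck for any archive that has to ingest data continuously.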

The problem it solves is a real one. Every organization managing long-term archives today is running a continuous migration operation, copying data to new media every few years before the old media degrades. That process has real costs in time, energy, and equipment, and every cycle carries a risk of migration errors and data loss. Silica would eliminate that cycle entirely for data that qualifies for the write-once model.
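The cost of the migration cycle compounds with the retention period. The sketch below counts how many copy cycles a retention requirement implies and, under a deliberately simple model where each cycle independently loses a record with some fixed probability, how the survival odds shrink. The refresh interval and loss rate in the usage lines are hypothetical placeholders, not measured figures.

```python
import math


def migrations_needed(retention_years: float, refresh_interval_years: float) -> int:
    """Copy-to-new-media cycles needed to keep data for retention_years when
    media must be replaced every refresh_interval_years."""
    return max(0, math.ceil(retention_years / refresh_interval_years) - 1)


def survival_probability(n_migrations: int, per_migration_loss: float) -> float:
    """Chance a record survives every migration, assuming each cycle
    independently loses it with probability per_migration_loss."""
    return (1.0 - per_migration_loss) ** n_migrations


# Hypothetical inputs: a 100-year retention requirement, media refreshed every 7 years.
n = migrations_needed(100, 7)
print(n, survival_probability(n, per_migration_loss=0.001))
```

A 100-year requirement with a 7-year refresh means 14 migrations; a medium that outlasts the retention period drives that count to zero.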

For data that doesn't — anything requiring updates, versioning, or frequent access — existing storage infrastructure remains the answer.

How Far Away Is This From Being a Product?

Further than February's headlines implied.

The Microsoft Research blog noted that "the research phase is now complete" and that the team is "continuing to consider learnings from Project Silica as we explore the ongoing need for sustainable, long-term preservation of digital information." That language describes a research program that has reached a scientific conclusion — not a product roadmap.

The commercialization gaps are real. Write speeds remain far below magnetic tape: an LTO-9 drive writes at up to 400 MB/s native (3,200 Mbit/s), roughly 50 times the 65.9 Mbit/s demonstrated for glass. The decision between birefringent and phase voxels hasn't been made, which means the production hardware stack hasn't been defined. Manufacturing the writing and reading systems at scale is an engineering problem, separate from the physics problem the Nature paper solves.

What the paper does establish, for the first time, is a complete, end-to-end glass archival storage system that works reliably across writing, reading, and decoding at a scale of billions of voxels. That's the scientific foundation. Building a product on top of it is the next problem, and Microsoft hasn't committed to a timeline or confirmed it's pursuing commercialization.

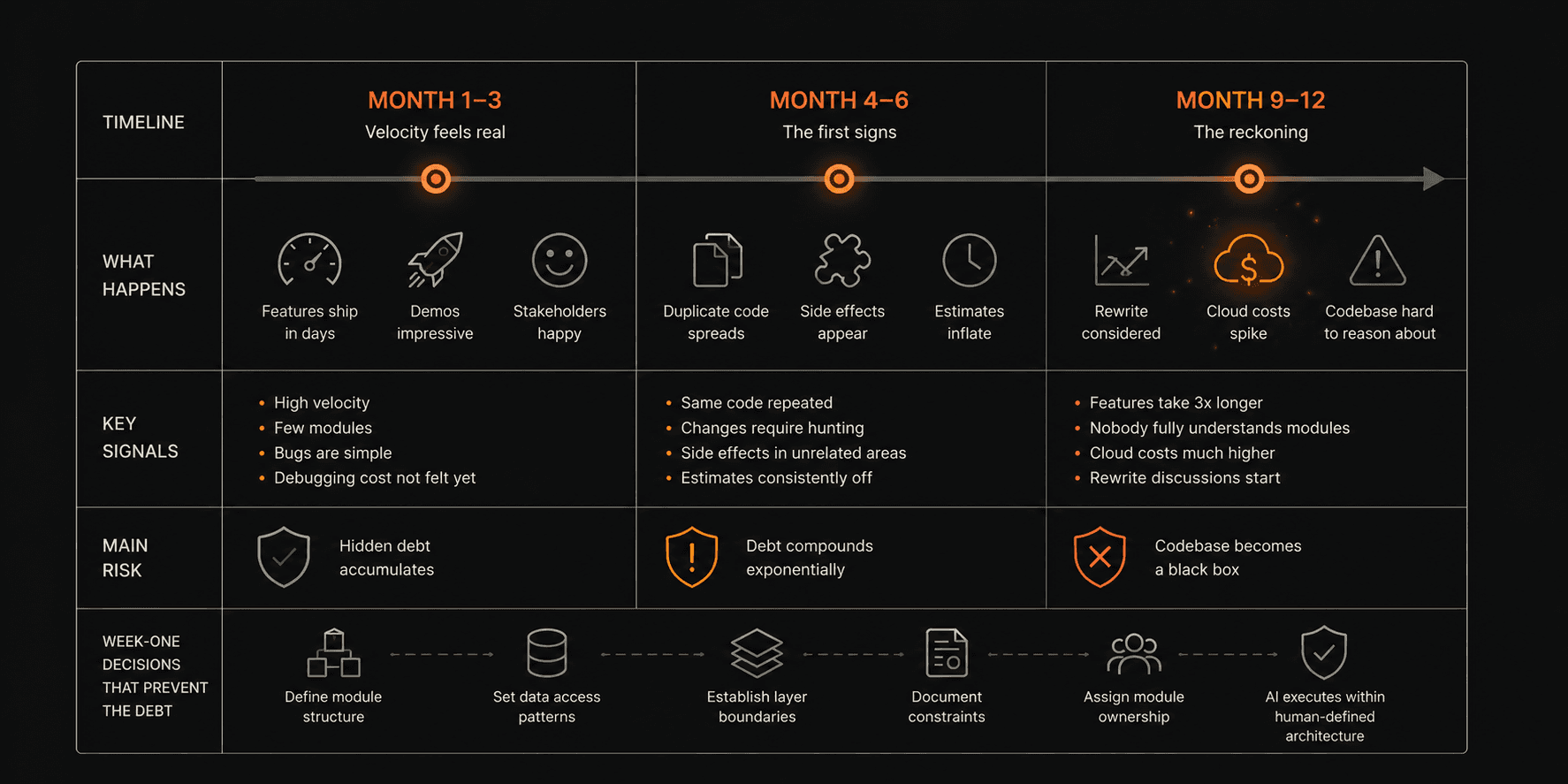

What This Means for Systems Built Today

The data migration problem Silica is designed to eliminate

The significance of Silica isn't the 10,000-year number. It's what that number eliminates.

Every system built today that includes long-term data retention has a migration dependency baked in. The database backups that have to be moved every decade. The compliance archives that require active infrastructure to remain accessible. The scientific datasets that are already being lost because the media they were written to in the 1990s is no longer readable.

A storage medium that doesn't degrade doesn't just reduce storage costs. It changes the architecture of systems that depend on long-term data integrity. Audit logs, clinical records, financial archives, legal documentation — all of these currently require ongoing infrastructure investment just to remain accessible. Silica, if it reaches commercial viability, removes that dependency for the data that qualifies for a write-once model.

What builders and CTOs should actually take from this

The practical implication for anyone building systems today is not to wait for Silica. It's not a product, and the timeline to commercial availability is undefined. The implication is to understand where long-term data integrity is a genuine architectural requirement in what you're building — and to distinguish between data that needs to be updated and data that needs to survive.
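Drawing that line can start as an explicit, reviewable rule in the system's design rather than an afterthought. The sketch below routes each class of data to a mutable or write-once tier based on whether it changes, how often it's read, and how long it must survive. The profile fields, tier names, and the 10-year threshold are all hypothetical placeholders to be replaced by a system's actual retention requirements.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    MUTABLE = "mutable primary storage"
    WRITE_ONCE_ARCHIVE = "write-once archive"


@dataclass(frozen=True)
class DataClassProfile:
    name: str
    needs_updates: bool        # records change after they are written
    retention_years: int       # how long the data must survive
    accessed_frequently: bool


def choose_tier(profile: DataClassProfile, archive_threshold_years: int = 10) -> Tier:
    """Send long-lived, immutable, rarely read data to a write-once tier;
    everything else stays on mutable storage. The threshold is a placeholder."""
    if (not profile.needs_updates
            and not profile.accessed_frequently
            and profile.retention_years >= archive_threshold_years):
        return Tier.WRITE_ONCE_ARCHIVE
    return Tier.MUTABLE


for p in [
    DataClassProfile("finalized audit log", False, 25, False),
    DataClassProfile("working project files", True, 25, True),
]:
    print(f"{p.name}: {choose_tier(p).value}")
```

Keeping the rule explicit means the routing decision survives whatever archival medium eventually sits behind the write-once tier.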

For systems like MeasureAI — where construction drawings and takeoff records have long-term audit and compliance value — or CaseBench — where clinical case records carry medico-legal retention requirements — the question of how long data needs to live, and in what form, is an architecture decision made in week one. The media available to answer that question is changing. Silica is the clearest signal yet of where it's going.

What Comes Next

The research phase of Project Silica is complete. The physics works. The end-to-end system has been demonstrated. What comes next — whether Microsoft pursues commercialization, whether another organization builds on the published findings, and what the production hardware stack looks like — remains open.

What's already settled: the data migration cycle that defines long-term archival storage today is not a permanent feature of how information gets preserved. Silica is the most credible published evidence of what replaces it.

Imaginary Space builds production systems for organizations that need their infrastructure to work reliably for years. Understanding what's changing at the infrastructure layer — storage, compute, data architecture — is part of how we make week-one decisions that hold.